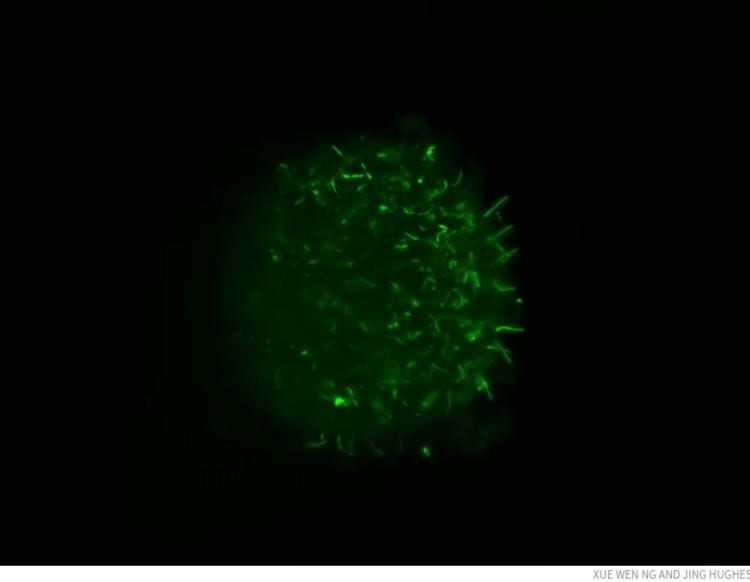

Light-sheet fluorescence microscopy, an imaging tool that can rapidly produce 3D images of complex cellular structures, gives scientists the power to visualize the myriad miniature dramas unfolding inside living cells and tissues. Scientists at Washington University School of Medicine in St. Louis are using the technique to watch, in astonishing detail, tiny organelles rearrange themselves inside cells, to monitor the stabilization of blood vessel walls in developing fish, and to map the network of filtration units in a human kidney. But high-resolution imaging generates reams of data, and handling these enormous datasets has emerged as a chokepoint on the path to widespread adoption of the technique.

With the aid of a $1.2 million grant from the Arnold and Mabel Beckman Foundation, a team of researchers led by David W. Piston, PhD, the Edward Mallinckrodt Jr. Professor and head of the Department of Cell Biology & Physiology, is working to develop the computational tools necessary to analyze light-sheet microscopy data so scientists can take full advantage of its potential to help answer pressing biomedical questions.

“Conventional fluorescence microscopy is probably the most widely used tool in molecular biology,” said Piston, the grant’s principal investigator. “It’s an enormously powerful technique, but for 3D imaging, it’s slow. Light-sheet fluorescence microscopy brings the time to create a 3D image down from minutes to less than a second. That’s fast enough to create movies of biological processes in action.”

In conventional fluorescence microscopy, researchers label molecules or cells of interest with a fluorescent marker and then illuminate the sample with a laser beam to make the labeled structures fluoresce. In light-sheet fluorescence microscopy, the sample is illuminated with a sheet of light instead of a focused beam. Illuminating a whole plane at once allows researchers to snap pictures of the sample a layer at a time, instead of spot by spot. By rapidly scanning the sheet of light along the length of a sample, light-sheet microscopes can create multiple 3D images every second.

“You can imagine that if I can take a 3D picture a couple times a minute, at the end of a day I have a few 3D pictures. If I take several a second, by the end of the day I’ve filled up every computer disk we have,” Piston said. “Transferring that amount of data from place to place takes forever. And once you have it, what do you do with it? You can’t look at it as is. You need to curate it, compress it, make it simpler to view.”
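To put rough numbers on the scale Piston describes, the back-of-the-envelope sketch below estimates how much raw data a day of imaging could produce. Every figure in it is an illustrative assumption rather than a value from the project: a volume of 1024 × 1024 × 50 voxels, 16-bit pixels, three volumes per second for the fast case, two volumes per minute for the slow case, and an eight-hour imaging day.

```python
# Rough estimate of raw data volume from 3D imaging.
# All numbers below are hypothetical, chosen only for illustration.

def bytes_per_volume(nx, ny, nz, bytes_per_voxel=2):
    """Size in bytes of one uncompressed 3D image (default: 16-bit voxels)."""
    return nx * ny * nz * bytes_per_voxel


def daily_bytes(volume_bytes, volumes_per_second, hours=8.0):
    """Total uncompressed bytes acquired over an imaging day of the given length."""
    return volume_bytes * volumes_per_second * hours * 3600


if __name__ == "__main__":
    vol = bytes_per_volume(1024, 1024, 50)   # assumed volume dimensions
    fast = daily_bytes(vol, 3)               # assumed: three volumes per second
    slow = daily_bytes(vol, 2 / 60)          # assumed: two volumes per minute
    print(f"one volume:     {vol / 1e6:.0f} MB")
    print(f"fast, per day:  {fast / 1e12:.2f} TB")
    print(f"slow, per day:  {slow / 1e12:.2f} TB")
```

Under these assumptions, the gap between the two cases is simply the ratio of the acquisition rates, which is why a jump from a couple of volumes a minute to several a second changes data handling from a minor chore into the central problem.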

Co-principal investigator Timothy Holy, PhD, the Alan A. and Edith L. Wolff Professor of Neuroscience, and Ulugbek Kamilov, PhD, an assistant professor of electrical and systems engineering at Washington University’s McKelvey School of Engineering, aim to create an integrated software pipeline that will take in big data and turn out a manageable dataset ready for analysis. The software, which is being written in Julia, a programming language that Holy helped build, will create easily analyzable datasets by combining all available measurements and then using deep-learning techniques to identify and classify the most biologically relevant parts.

The software will be validated and refined by applying it to light-sheet microscopy data generated as part of ongoing scientific studies. A key step will be to prepare the 3D data for use in visualization software.

“I can make as many plots and graphs and bar graphs as I want, and people will say, ‘I don’t quite get it.’ But you show them one movie and it’s ‘Oh, I see exactly what you’re talking about,’” Piston said. “Our visualization programs were not built for science; they were built for movies like ‘Jurassic Park’ and ‘Star Wars.’ They can’t take these huge datasets in. We have to reduce them before we can use the programs.”

Co-principal investigator James Fitzpatrick, PhD, a professor of neuroscience and of cell biology & physiology, and director of the Washington University Center for Cellular Imaging (WUCCI), leads the second arm of the project: capacity building. He and his team will work to optimize the data collection workflows, as well as train and educate faculty, staff and students on light-sheet microscopy.